Since its early revival in the 1990s,1,2 consciousness research has advanced from descriptive correlations to mechanistic characterization, clarifying key requirements for conscious states such as neural complexity, a combination of integration and differentiation.3 Among the most powerful innovations to apply this refined knowledge is the Perturbational Complexity Index (PCI), a quantitative marker derived from transcranial magnetic stimulation combined with high-density electroencephalography (TMS-EEG).4 In this approach, brief magnetic pulses are delivered to the cortex to perturb ongoing activity while the resulting EEG responses are recorded across the scalp. Conscious wakefulness and dreaming during ketamine anesthesia or rapid eye movement (REM) sleep are distinguished by long-lasting, spatially distributed, and differentiated patterns of causal interactions. In contrast, unconscious states such as deep non-REM sleep or anesthesia induced by other agents exhibit local, short-lived, and stereotyped responses.5–7 PCI compresses these spatiotemporal dynamics into a single complexity metric that reliably tracks the presence or absence of consciousness across physiological and pathological conditions.8 It is now being validated in multicenter clinical studies for bedside assessment of unresponsive patients, including in the intensive care unit.9
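
The idea of compressing a spatiotemporal response into one complexity value can be illustrated with a toy sketch. It assumes the response has already been reduced to a binary channels-by-time matrix (1 = significant activation) and uses a simple dictionary-based phrase count in the spirit of Lempel-Ziv compression. The function names, the flattening order, the normalization, and the synthetic matrices are all illustrative assumptions, not the published PCI pipeline.

```python
import math
import random


def lz_phrase_count(bits):
    """Count phrases in a simple LZ78-style parsing of a 0/1 string."""
    seen = set()
    phrase = ""
    count = 0
    for b in bits:
        phrase += b
        if phrase not in seen:  # new phrase: store it and start a fresh one
            seen.add(phrase)
            count += 1
            phrase = ""
    if phrase:  # a trailing, already-seen phrase still counts once
        count += 1
    return count


def normalized_complexity(matrix):
    """Flatten a binary matrix row by row and normalize its phrase count.

    The normalization (by n / log2(n) and by the entropy of the fraction
    of ones) is an assumption made for illustration; with short inputs the
    result is not bounded by 1.
    """
    bits = "".join(str(v) for row in matrix for v in row)
    n = len(bits)
    p = bits.count("1") / n
    if p in (0.0, 1.0):  # no variability at all: nothing to compress
        return 0.0
    entropy = -p * math.log2(p) - (1 - p) * math.log2(1 - p)
    return lz_phrase_count(bits) * math.log2(n) / (n * entropy)


# Hypothetical "stereotyped" response: every channel repeats the same pattern.
stereotyped = [[1] * 50 + [0] * 50 for _ in range(20)]

# Hypothetical "differentiated" response: each channel follows its own pattern.
rng = random.Random(0)
differentiated = [[rng.randint(0, 1) for _ in range(100)] for _ in range(20)]

c_stereo = normalized_complexity(stereotyped)
c_diff = normalized_complexity(differentiated)
print(f"stereotyped: {c_stereo:.2f}, differentiated: {c_diff:.2f}")
```

In this toy setting the differentiated matrix compresses less well than the stereotyped one, which is the qualitative contrast the text describes between conscious and unconscious responses. Note that a random matrix is differentiated but not integrated, so this sketch illustrates only the compression step, not the causal, perturbation-based part of the measure.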

Recent methodological refinements have also prompted a thorough re-evaluation of the relationship between consciousness and human cognition (here defined as the set of neuronal computations enabling purposeful behaviors).10 In particular, many studies now employ awareness measures rigorously validated by subjective reports, most notably the Perceptual Awareness Scale (PAS). By design, the PAS captures graded changes in subjective experience that the subjects themselves validate as corresponding to their own phenomenological changes.11 Specifically, participants are presented with near-threshold stimuli and asked to construct a scale reflecting the different types of experiences elicited by this stimulus set. Across studies, this procedure consistently yields a graded scale comprising no experience (PAS 1), a glimpse (PAS 2: subjects saw something but did not know what it was), and almost clear or clear experience (PAS 3–4). Crucially, PAS 2 is qualitatively distinct from PAS 3–4 in being non-categorical: it does not include perception of object identity. This non-binary structure of the original PAS is frequently overlooked in later adaptations that retain the PAS label while collapsing the scale into graded vividness ratings within predefined stimulus categories, without repeated participant-based validation (e.g.,12,13), an oversimplification that also underlies critiques portraying PAS as merely a linear measure of clarity for specific contents.14 Studies that criticized PAS as being subject to criterion-placement effects also used a binary version rather than the original validated one.12 Importantly, studies using PAS in its validated, non-binary form consistently show that both above-chance discrimination and subtler priming effects occur only when at least a minimal level of stimulus awareness is present15–18 (Figure 1A).
In contrast, earlier reports of high-level cognition without awareness can largely be explained by reliance on unvalidated binary ratings, post-hoc selection of “unaware” trials, and resulting regression-to-the-mean artifacts.10,18–20 When these confounds are eliminated — by using graded scales, model-based analyses, and/or Bayesian approaches — stimulus awareness reliably predicts goal-directed behavioral performance.10
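
The regression-to-the-mean artifact mentioned above can be made concrete with a small simulation. This is a minimal sketch under stated assumptions: every simulated participant has some true awareness (arbitrary units), the awareness measure is noisy, and "unaware" participants are selected post hoc because their measured score is at or below zero. The distribution parameters and variable names are hypothetical and chosen only for illustration.

```python
import random
import statistics

rng = random.Random(1)

N = 20000          # simulated participants (arbitrary)
TRUE_MEAN = 0.5    # assumed mean true awareness, arbitrary units
TRUE_SD = 0.2      # assumed spread of true awareness
NOISE_SD = 0.5     # assumed measurement noise of the awareness test

participants = []
for _ in range(N):
    true_awareness = rng.gauss(TRUE_MEAN, TRUE_SD)
    measured = true_awareness + rng.gauss(0.0, NOISE_SD)
    participants.append((true_awareness, measured))

# Post-hoc selection: keep only those who *measured* at or below zero.
selected = [(t, m) for t, m in participants if m <= 0.0]

mean_measured = statistics.mean(m for _, m in selected)
mean_true = statistics.mean(t for t, _ in selected)

print(f"selected {len(selected)} of {N} participants")
print(f"mean measured awareness in selected group: {mean_measured:.2f}")
print(f"mean true awareness in selected group:     {mean_true:.2f}")
```

Under these assumptions the selected group looks unaware on the noisy measure while its true awareness remains well above zero, so any residual task performance in that group cannot be attributed to unconscious processing.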

Converging clinical data reinforce a tight coupling between consciousness and purposeful behavior. In patients with disorders of consciousness, purposeful bedside behaviors such as visual tracking or command following (signaling a ‘minimally conscious state’ or MCS) are accompanied by high values of the PCI in roughly 95% of cases8 (Figure 1B). Together, PAS-based behavioral studies and PCI measurements converge on the conclusion that consciousness is likely a necessary prerequisite for most purposeful human behaviors.

Unlike overt behavior, robust neural activation can occur in the absence of subjective experience. During unconscious states such as focal-to-bilateral tonic–clonic seizures, for example, widespread gamma-frequency activity and neuronal firing increases have been documented by intracranial recordings.21 Even in conscious wakefulness, single-pulse TMS reliably evokes strong, highly location-specific EEG responses without typically inducing any content-specific change in conscious experience.22 Likewise, trials rated as “no experience” on the Perceptual Awareness Scale (PAS 1) may elicit substantial stimulus-evoked neural activity.23 Such observations highlight the need to determine which specific spatiotemporal features of brain dynamics are truly necessary for consciousness and for its behavioral manifestations. As in the case of behavioral studies, many neuroimaging investigations relied on binary awareness ratings (e.g.24–27) that have not been validated against subjective reports, limiting their interpretability.10 The advent of graded, validated awareness measures now enables more rigorous comparisons and helps disentangle neural signatures that uniquely index consciousness, those that drive purposeful behavior, and those that may overlap between the two.

Consciousness vastly exceeds behavior

Importantly, vivid, rich experiences can also occur without any behavioral expression. Consider the richness of ordinary experience: walking down a busy city street, we perceive a vast visual field of colors, contours, movement, and depths — buildings, people, animals, cars — accompanied by a medley of sounds and bodily sensations. Yet, at any given moment, we can report or act on only a few of these elements.28

Perhaps the most striking example of consciousness dissociated from function is the state of “pure presence” or “naked consciousness” — a state of vivid awareness devoid of thought, self, or perceptual objects.29 Reports from advanced meditators reaching this state after extended retreats describe a vivid sense of presence and vastness, with the mind silent and still.29 Because pure presence cannot be signaled as it occurs, it is reported retrospectively and reliably distinguished from other meditative or non-meditative states. Unlike drowsiness or dreamless sleep, pure presence involves heightened awareness. Recent findings link it to a distinctive neural signature marked by widespread reduction of cortico-thalamic activity.29 Such states exemplify consciousness entirely divorced from cognition: subjects are disconnected from the environment, lack self-awareness, do not think, and cannot act — a state of pure being without doing.

A related dissociation occurs in psychedelic states, which can produce vivid hallucinations30 or experiences akin to pure presence.31 At sufficiently high doses, consciousness becomes fully decoupled from intelligent performance. Similar phenomena occur in many forms of dreaming,32 or during intense pain or sudden loud stimuli, when cognition is momentarily overwhelmed by raw experience.

Finally, some patients with severe brain lesions are incapable of communicating, even though they show reproducible visual tracking or response to commands. Despite their very low level of responsiveness, strong evidence suggests they are vividly conscious.8,33,34 In these patients, most posterior-central cortical regions (associated with conscious states and many conscious contents in healthy adults) are relatively spared, while many prefrontal areas crucial for cognitive functions such as working memory or attention are often severely damaged. These patients retain sensory-evoked responses across both primary and associative posterior-central regions, and PCI, the most sensitive and specific empirically validated electrophysiological index of consciousness,35 yields values comparable to those of healthy, awake individuals.33 Notably, a dissociation between focused attention and vivid subjective experience, also seen in neurotypical individuals,36 is not only likely in non-communicative patients but also commonly observed in communicative neurological patients with, for example, delirium37,38 or neurodegenerative diseases.39,40

Even when subjective experiences drive behavior, only a narrow fraction of their contents is expressed. Purposeful behavior generally depends on consciousness, yet the richness of experience far exceeds what actions reveal. For any given stimulus, behavior typically reflects only its categorical identity, leaving most perceptual details unexpressed. Our inability to recall or report all features and spatial locations within a scene stems from limits of memory and attention, not of consciousness itself: experience vastly exceeds access.28 Indeed, it is easy to show that we could not report individual items without also perceiving the visual groupings that compose them, nor perceive those groupings without seeing them bound to specific locations in the visual field — even if unnoticed or forgotten.28 Conversely, many cognitive operations triggered by conscious experience unfold outside awareness. For instance, prefrontal regions such as Broca’s area routinely translate vague intentions into fluent speech, while we remain entirely unaware of how all these computations occur.

To disentangle brain regions involved in consciousness from those mediating its consequences, recent research has focused on methods that separate neural activity underlying subjective experience from that driving unconscious cognitive processes. Refined paradigms now examine neural signatures of consciousness not only during stimulus presentation but also during spontaneous experiences such as dreams, hallucinations, and meditative states.29,32,41 During stimulus presentation, three approaches further help isolate genuine signatures of consciousness by minimizing behavioral and cognitive confounds: “no-report” paradigms, experimental designs that distill content-specific activity reproducible across different task contexts, and analyses focused on brain activity triggered by task-irrelevant or non-target stimuli.22,42,43 These approaches enable the identification of brain states uniquely associated with conscious experience (consistent tracking of specific phenomenal contents across all conditions) and their distinction from processes mediating downstream cognitive effects.44,45 Once neural signatures of conscious contents are established, their complementary pathways to cognitive and behavioral outputs should be mapped to reconstruct the full causal chain. Clarifying the neural mechanisms that translate subjective experience into behavior is essential, both for improving rehabilitation in patients with covert consciousness33 and for elucidating causal links between consciousness and cognition in healthy and clinical populations.10

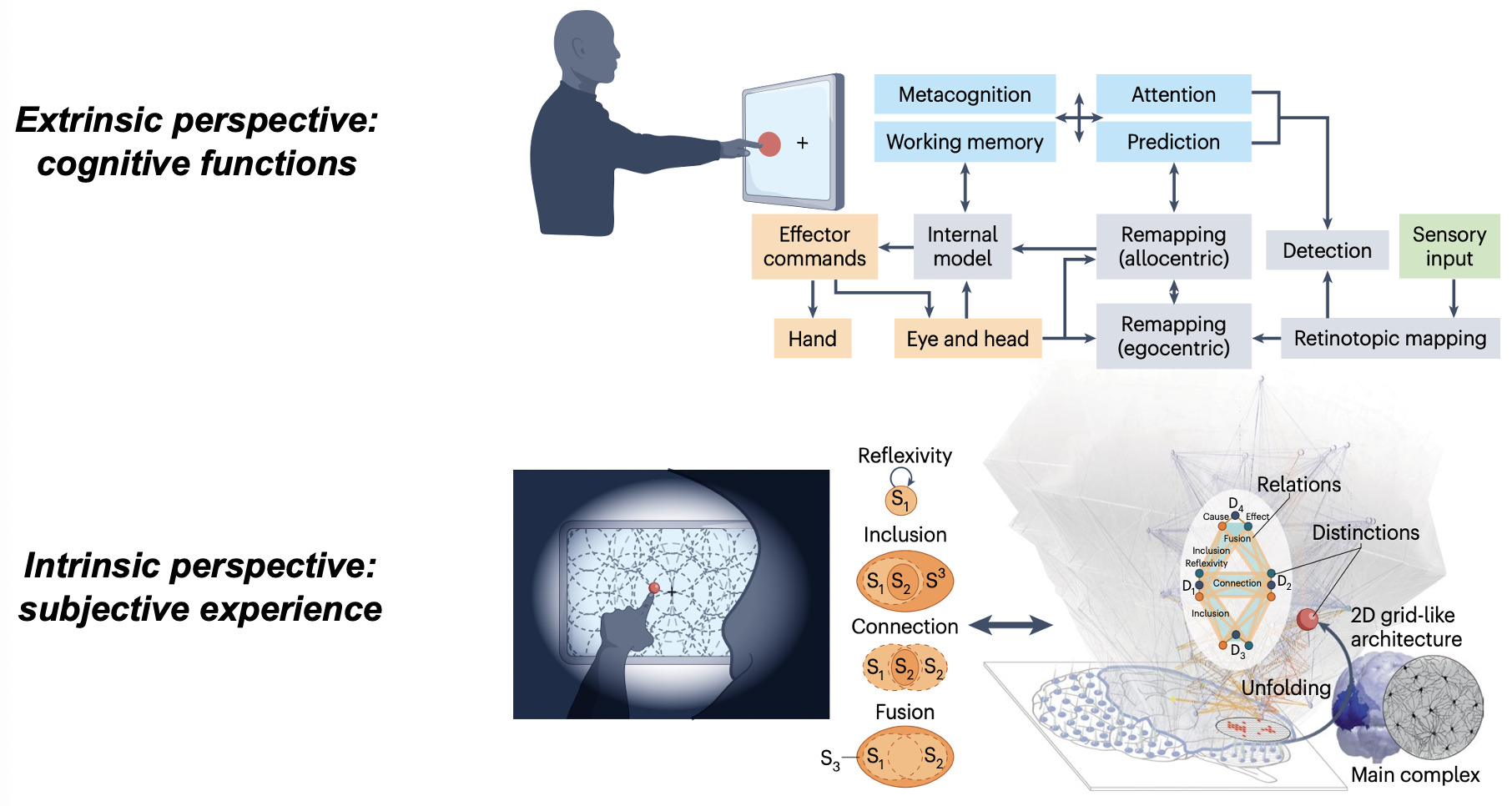

Explaining consciousness versus cognition: Intrinsic versus extrinsic perspectives

Beyond identifying neural signatures, consciousness and cognition demand fundamentally different explanatory frameworks. Cognitive neuroscience excels at describing the brain as an information-processing system, mapping input–output functions, internal states, and computational mechanisms from an extrinsic, third-person perspective. Yet this external view cannot account for why subjective experience accompanies neural operations.46 From such an extrinsic standpoint, consciousness can seem absent altogether, inviting some to deny its existence or treat it as an illusion.47

Nonetheless, the fact remains that brain activity sometimes supports experience and sometimes does not, as illustrated by the contrast between dreaming and dreamless sleep,48 or conscious versus unconscious brain activation during waking.10,23 Whether quietly contemplating a sunset or engaged in a demanding task, we may inhabit a richly structured inner world with spatial extendedness, temporal flow, objects binding general concepts to particular features, and vivid sensory qualities such as colors and sounds. Explaining the presence and qualitative character of such experiences carries profound ethical and clinical implications, informing decisions for patients with disorders of consciousness and shaping approaches to, for example, mental health and chronic pain.33,46 Because subjective experience is private and accessible only from the first-person perspective, its systematic study requires an intrinsically oriented science49,50 — one that characterizes the properties of experience through introspection and uses them as a starting point to derive principled explanations and generate testable predictions about their neural substrates.46

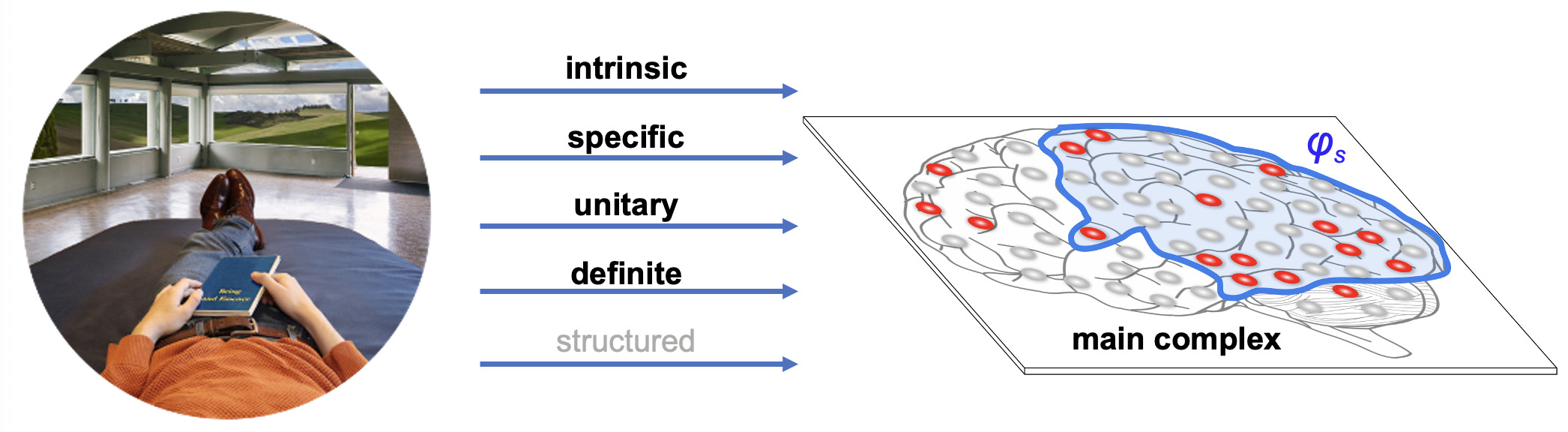

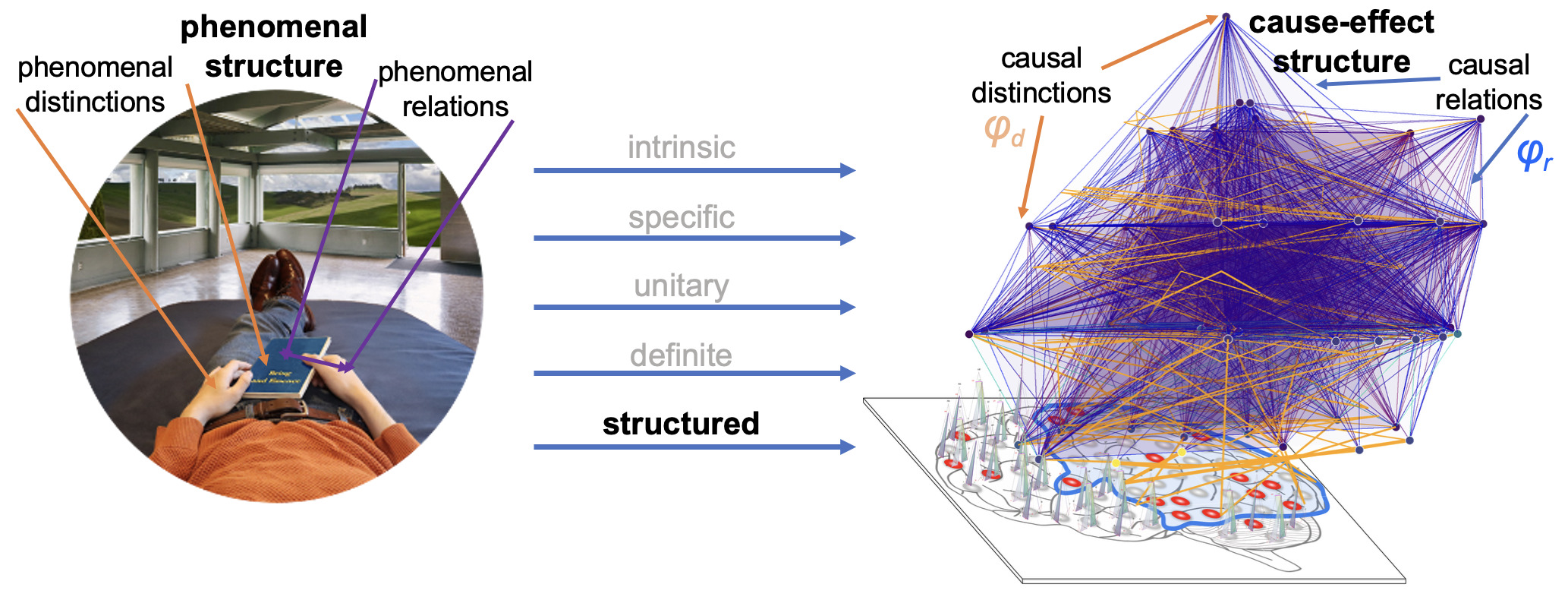

Integrated Information Theory (IIT) adopts this consciousness-first, intrinsically oriented strategy. It begins by identifying the properties that are shared by all subjective experiences — the essential properties of consciousness. In short, every experience is intrinsic (for the experiencer), specific (this one), unitary (a whole), definite (all of it), and structured (the way it is, composed by phenomenal distinctions bound by phenomenal relations). From there, IIT formulates the causal requirements that a physical substrate must satisfy to support consciousness, explicitly embedding these properties within its mathematical framework.

This consciousness-first, intrinsic approach has already led to testable links between consciousness and causal complexity, exemplified by the development of the Perturbational Complexity Index.46 It also predicts that consciousness arises in systems with maximal intrinsic cause–effect power and predicts which physical substrates could be able to support it. In humans, this maximum is expected in posterior-central cortical areas, whose dense hierarchical lattice of convergent–divergent connections among specialized units (with partially overlapping inputs and outputs) is especially conducive to high integrated information,46,51 unlike the more modular connectivity of much of the prefrontal cortex.52 Lesion, stimulation, and neuroimaging studies support this prediction, showing that posterior-central regions best predict both the presence and content of consciousness22,53 whereas the involvement of the prefrontal cortex is not required.22,54 In line with these findings, the recent Cogitate Consortium study44 provided convergent evidence that neither prefrontal cortex activation nor content-specific decodability is necessary for the experience of conscious content. Instead, content-specific activation and decoding that generalized across task contexts were consistently observed in the posterior cortex. Although the same study did not detect content-specific neural synchrony between primary and associative posterior cortices, the corresponding preregistered prediction was formally tested only in intracranial recordings, where sampling of early visual areas was extremely limited (12 electrodes across all patients versus 472 in the prefrontal cortex), yielding inconclusive Bayes factors (1.2–1.4) in favor of the null hypothesis. Ongoing theoretical work is also developing quantitative methods to map the boundaries of the cortical substrate of consciousness — defined as the region of maximal intrinsic cause–effect power — using high-resolution neuroimaging.55

The causal framework of IIT, explaining why only some substrates may be able to support consciousness, also constrains how neural activity relates to specific experience contents. In fact, much of IIT’s current research focuses on refining predictions concerning the neuronal substrates of fundamental experiential qualities,46,51,55–59 then challenging them through empirical studies.

IIT’s effort to explain the quality of different kinds of experiences through different kinds of cause–effect structures began with spatial experience — the feeling of extendedness.56 It predicts that such experiences are supported by ‘grid-like’ connectivity structures in topographically organized sensory areas.50,56 This prediction has been tested through spike-timing dependent plasticity experiments in the visual modality60 and is being further explored in adversarial collaborations.61 IIT also predicts that experiences of temporal flow depend on directed grid architectures,58 a claim now moving towards empirical testing. For hierarchical contents, such as faces or objects, IIT proposes that supporting networks should be able to specify high-level invariant concepts that bind to configuration-specific lower-level features, thanks to their rooted-tree connectivity.51 It further predicts that modality-specific qualities, such as colors “painted” on the canvas of visual space or sounds “played” on the score of time, are supported by local neuronal “cliques” attached to nodes of grid-like networks (specifying spatial or temporal experience structures) within modality-specific areas.51 Finally, IIT predicts that increased phase synchrony among activated units within the substrate of consciousness may signal phenomenal binding — a hypothesis now under active investigation in both human and animal studies.45,55,62

If consciousness and cognition can be dissociated, why do we need consciousness to engage in purposeful behavior?

We have seen that consciousness vastly exceeds cognition and can be dissociated from it. But we have also seen that we can hardly engage in any purposeful behavior unless we are conscious. IIT provides a potential explanation. Computer simulations show that, under selective pressure and under constraints such as energy and wiring costs, the brains of simple “animats” evolve networks that are highly integrated, that is, substrates that can support high values of integrated information.63 This is especially true if the environment is complex and adaptation requires memory and sensitivity to context. The reason is that a highly integrated substrate allows many functions to be packed into a limited number of units, limiting energy expenditure and wiring costs. In maximally integrated networks, dense causal relations arising from overlapping inputs and outputs are also expected to enhance synchrony among activated units,51,62 creating a “synchronous advantage” that amplifies downstream effects of interconnected neural units.64,65

Yet these highly integrated substrates are also the ones that, according to IIT, satisfy the requirements for consciousness. Thus, while evolution selects for fitness rather than consciousness, systems with high Φ may naturally arise from energetic and computational constraints. Such selective pressures may explain how human brains perform a wide range of tasks while remaining vastly more energy efficient than artificial systems — by recent estimates, approximately 9 × 10⁸ times more so.66

Conclusion

Research at the intersection of consciousness and cognition is entering an especially productive phase, with the potential to bridge their long-standing divide and open new experimental paths in both healthy and clinical populations. These advances may deepen understanding of mind–brain relationships while informing clinical care34 and the broader pursuit of human flourishing.67

Funding Sources

MB is supported by NIH1R01NS138257, NIH1R41NS137850, NIHK23NS112473, DARPA HR00112320033, ARPA-H TDL 24-ICHUB-00010, TWCF0646, TWCF30258 and the Tiny Blue Dot Foundation.

Acknowledgements

MB wants to thank Giulio Tononi for many discussions over the years and constructive feedback on the manuscript, Benedetta Cecconi for helpful feedback, and Matteo Grasso and Jeremiah Hendren for assistance with the figures.

Conflicts of Interest

MB has no conflicts of interest to declare.
